Down scoping tokens and permission attenuation

This article discusses the potential challenges with running multiple services in environments with different levels of trust. It also discusses different solutions to:

- Untrusted environments

- Sharing access tokens without sharing permissions

- Changing access token permissions

Background

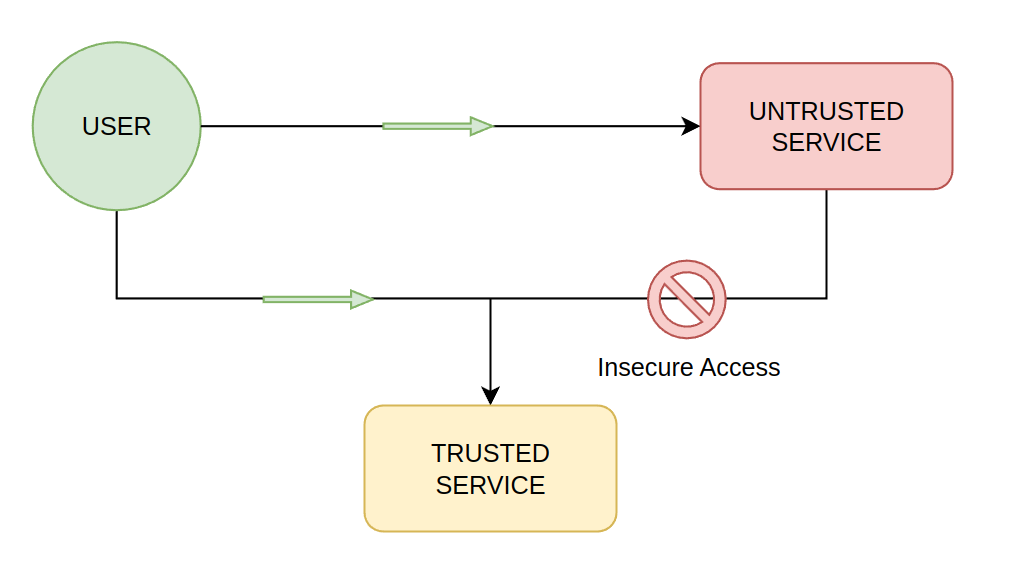

In large enough software deployments, it is inevitable that services with different levels of trust will communicate with each other. With Authress or any other authentication provider, this usually means that tokens from one service are sent to another service. This creates an opportunity for a service with a lower level of trust to abuse the tokens it receives.

Since a user has only one identity, there is only one user token, and that token represents all of their access. Presenting that token to a service, whether the untrusted service or the trusted service, grants access to that service.

The problem is that the Untrusted Service can use that user's token to access the Trusted Service. This does not require a malicious attacker. A more concrete example is two services within your company that hold different data. One could be a payments/orders service that holds PCI credit card data, and the other could be a service that supports the UI, such as a Search service. Both services are part of your platform, but one of these services should not call the other one.

Canonical JWT Access control

There are many different access control patterns, such as roles in JWTs, attributes, and policies. A comprehensive list and a comparison of them is available in the Academy article on Access control strategies.

The most common mistake is putting the roles or permissions into the JWT.

There are a number of problems with doing so and below we'll investigate each one.

1. User access updates

As the article on access control strategies highlights, a user's access will change over time, but their identity almost never will. When permission-related information is placed in the access token, the user needs to fetch a new token every time their access changes. To actually receive a new token, the user must revisit your login flow. This is extremely costly, since authentication is a very complex process. Additionally, there is a cost to identifying when the user's access should change, which is also not a simple problem. When the user's access changes, the UI the user is logged into would need to detect that change and force the user to log in again.

Not only is that a bad experience, it's counter to the value of access tokens.

The result of the authentication process needs to be something that will not change. The user doesn't change, nor does their identity change, so their access token should not change either.
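The difference can be sketched in a few lines. The following is a minimal illustration, not how any particular provider implements it: the `permissions` dictionary is a hypothetical stand-in for an authorization service, and the tokens are plain dictionaries rather than signed JWTs.

```python
# Hypothetical in-memory permission store; a real deployment would query
# its authorization service instead.
permissions = {"user_1": {"orders:read"}}

# Token that embeds permissions: a snapshot that goes stale when access changes.
stale_token = {"sub": "user_1", "permissions": ["orders:read"]}

# Token that carries identity only: nothing in it depends on current access.
identity_token = {"sub": "user_1"}


def is_allowed_embedded(token, permission):
    """Trust whatever permissions were written into the token at login."""
    return permission in token.get("permissions", [])


def is_allowed_lookup(token, permission):
    """Resolve the user's current permissions at request time."""
    return permission in permissions.get(token["sub"], set())


# Access is revoked after both tokens were issued.
permissions["user_1"].discard("orders:read")

print(is_allowed_embedded(stale_token, "orders:read"))  # True
print(is_allowed_lookup(identity_token, "orders:read"))  # False
```

The embedded check keeps granting access until the user logs in again, while the identity-only token reflects the change immediately without being reissued.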

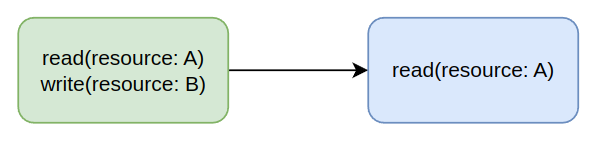

2. Directly attenuating the token

Instead of directly changing the token, we could opt for generating a second token that has limited scopes, permissions, access, claims, and so on. This is still better than logging the user in again, but causes other problems. There are two ways this can be accomplished, either online or offline. The online flow creates the ability for token holders (such as the user) to exchange their existing token directly for a new one with more limited access. The offline flow creates the ability for the holder to directly generate a new token.

The problem with the offline approach is that it requires complex tokens that actually support attenuation. For instance, there is no secure way to attenuate JWTs, so you have to switch completely to a different standard. Such standards do exist, for example Biscuits, but they cause problems just as bad as those mentioned elsewhere in this article. Further, since they are not commonly used, the technology and community around them limit the available tooling and increase the complexity of any system. If a system is large enough to get value from a non-JWT strategy, then it is also large enough to need more nuance than existing tooling can provide.

On the other hand, online attenuation can happen with JWTs, but it requires multiple operations over the network. Each of these operations is non-cacheable and usually leads to a proliferation of access tokens. Further, for every API call you make, you might want to scope down the permissions in the token, so we've added an extra API call to every request. However, this is still better than the offline case, since you can directly control your needs through your preferred interface using tools that offer simplicity.
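To make the cost of the online flow concrete, here is a toy model of it. `TokenService` and its `exchange` method are hypothetical names invented for this sketch, and the tokens are plain dictionaries rather than signed JWTs.

```python
class TokenService:
    """Toy stand-in for an identity provider's token exchange endpoint."""

    def __init__(self):
        self.network_calls = 0

    def exchange(self, token, requested):
        # In a real system this is a network round trip that cannot be
        # cached, because each narrowed token is issued on demand.
        self.network_calls += 1
        # The new token can only keep permissions the original already had.
        return {
            "sub": token["sub"],
            "permissions": token["permissions"] & set(requested),
        }


provider = TokenService()
user_token = {"sub": "user_1", "permissions": {"orders:read", "search:read"}}

for _ in range(3):
    # One exchange per downstream request; asking for "admin" adds nothing.
    narrowed = provider.exchange(user_token, ["search:read", "admin"])
    # ... call the downstream service with `narrowed` here ...

print(narrowed["permissions"])  # {'search:read'}
print(provider.network_calls)  # 3
```

Three downstream requests cost three additional exchanges, and each exchange produces another live token to keep track of.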

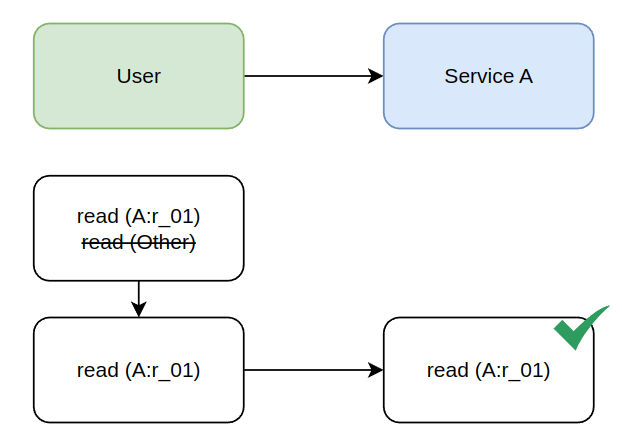

3. A priori knowledge of required access

In systems with multiple software components, there is no way for us to know with any accuracy what those new permissions should be. While it seems great on the surface to say "Service A is calling Service B, so the token requires permissions for Service B", what exactly are those permissions? Does every attenuation just guess what those permissions are? And what happens when Service B starts to call Service C? Now Service A needs to know the internal implementation detail that B => C so that when it attenuates the token it also includes permissions for C.

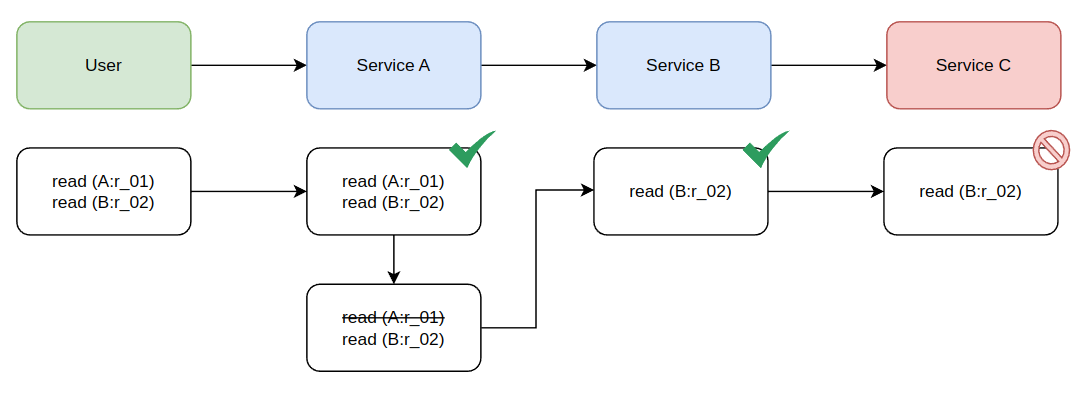

4. Avoiding product regressions

Since Service A should never know about the implementation detail that B => C, this token will be blocked when it gets to C. We can retroactively update the permissions we are attenuating so as to leave access to Service C in the token. Attenuating the token, as we've seen in the previous section, causes a bad developer experience. It also couples systems together, since knowledge that did not have to exist is now critical for components to communicate successfully. Additionally, this will create product regressions when Service B starts calling Service C.

We can't validate that the new implementation would cause a problem, because likely some of the tokens coming into B are allowed to call C. To prevent production failures, B has to start checking permissions for Service C before even sending the token to C. So A shouldn't know about Service C but now it does, and to prevent issues in production B also has to check for C's permissions. Neither of these is a good thing in a software platform.
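The regression can be reproduced with a small simulation. Everything here is hypothetical and simplified for illustration: the services are plain functions, the permission names `b:read` and `c:read` are invented, and tokens are dictionaries rather than signed JWTs.

```python
def attenuate(token, keep):
    """Return a copy of the token limited to the permissions in `keep`."""
    return {"sub": token["sub"], "permissions": token["permissions"] & set(keep)}


def service_c(token):
    if "c:read" not in token["permissions"]:
        raise PermissionError("Service C: access denied")
    return "c-data"


def service_b_v1(token):
    if "b:read" not in token["permissions"]:
        raise PermissionError("Service B: access denied")
    return "b-data"


def service_b_v2(token):
    # New release: B now depends on C internally.
    return service_b_v1(token) + "+" + service_c(token)


user_token = {"sub": "user_1", "permissions": {"b:read", "c:read"}}
# Service A only knows it is calling B, so it keeps only B's permission.
scoped = attenuate(user_token, ["b:read"])

print(service_b_v1(scoped))  # b-data
try:
    service_b_v2(scoped)
except PermissionError as error:
    print(error)  # Service C: access denied
print(service_b_v2(user_token))  # b-data+c-data
```

Nothing changed in Service A or in the user's actual access, yet the request starts failing once B is upgraded. The only quick fix available to Service A is to stop removing `c:read`.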

5. Creating pits of failure

Token attenuation creates multiple pits of failure. The most important one is a security regression. The premise of attenuation is that we can scope down a token to prevent the systems that receive it from accessing systems they shouldn't. However, at inspection time we cannot know that Service B should not call Service C. All we know is that B wants to and can't. The natural thing for the engineering team to do is undo the restriction: ensure the token still has the permission by no longer removing it. That means the solution to token attenuation is to undo token attenuation. Further, we can start to question why we need attenuation at all, especially when we can see that the service we are calling is asking for permissions we are taking away.

The major issue with permission attenuation is that the resolution to a request that is Access Denied is to stop attenuating the token. When the solution to a problem requires reversing your security implementation, there is an issue with that implementation.

Token attenuation verdict

Token attenuation is both a security and a product problem, and it does not solve the problem it sets out to solve. What this section has taught us, however, is that to create a Pit of Success we need a solution that starts with less access and enables us to grant more access when required. So rather than scoping down tokens, we actually want the ability to selectively scope up.

Scoping up has similar problems to scoping down, but with one key difference: it no longer results in the pit of failure. If by default we assume that an access token will be passed around and might end up in a less-trusted system, then we've set a baseline. To raise the security of a single service above that baseline, we can increase the restrictions on calling that service.

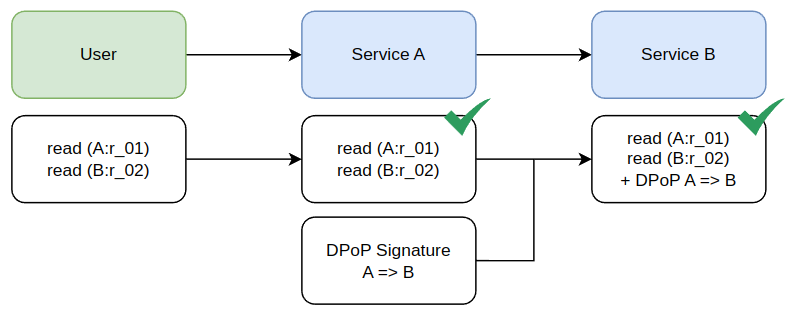

One recommended solution for scoping up is to introduce what is known as OAuth Demonstrating Proof of Possession (DPoP). When a request needs to enter a higher security context, these services can require not only the incoming user access token, but also a second signature that represents the calling machine service.

DPoP signatures are scoped both from the calling Service A and to the recipient endpoint B (and optionally B:/resource/...). Since B checks that the request is for B as well as from A, there is no way for an attacker that receives an access token to use it without a valid DPoP signature. If all your high-trust services require this signature, and authorization is checked for both the user's access token and the service client's use of that token, then we've succeeded in adding a security layer that prevents untrusted services from calling the trusted ones.
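As a rough sketch of what scopes a proof to one request, the snippet below builds and checks the payload claims of a DPoP proof as named in RFC 9449 (`jti`, `htm`, `htu`, `iat`, `ath`). It deliberately omits the signature itself, which requires an asymmetric key and a JWT library, as well as replay tracking of `jti`. The function names and the `service-b.example` URL are made up for the example.

```python
import base64
import hashlib
import hmac
import secrets
import time


def b64url(data: bytes) -> str:
    """Base64url encoding without padding, as used in JWTs."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def access_token_hash(access_token: str) -> str:
    """The `ath` claim: base64url of the SHA-256 hash of the access token."""
    return b64url(hashlib.sha256(access_token.encode("ascii")).digest())


def build_proof_claims(method: str, url: str, access_token: str) -> dict:
    """Claims the calling service would sign with its private key."""
    return {
        "jti": secrets.token_urlsafe(16),  # unique ID for this proof
        "htm": method,  # HTTP method of the request
        "htu": url,  # target URL of the request
        "iat": int(time.time()),  # creation time
        "ath": access_token_hash(access_token),  # binds proof to the token
    }


def check_proof_claims(claims, method, url, access_token, max_age_seconds=60):
    """Checks the recipient runs AFTER verifying the proof's signature."""
    return (
        claims["htm"] == method
        and claims["htu"] == url
        and abs(time.time() - claims["iat"]) <= max_age_seconds
        and hmac.compare_digest(claims["ath"], access_token_hash(access_token))
    )


claims = build_proof_claims("POST", "https://service-b.example/orders", "user-token")
print(check_proof_claims(claims, "POST", "https://service-b.example/orders", "user-token"))
print(check_proof_claims(claims, "POST", "https://service-c.example/orders", "user-token"))
```

The first check passes and the second fails, because the proof was created for a different URL. A proof captured together with the access token therefore cannot be replayed against another endpoint or paired with a different token.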

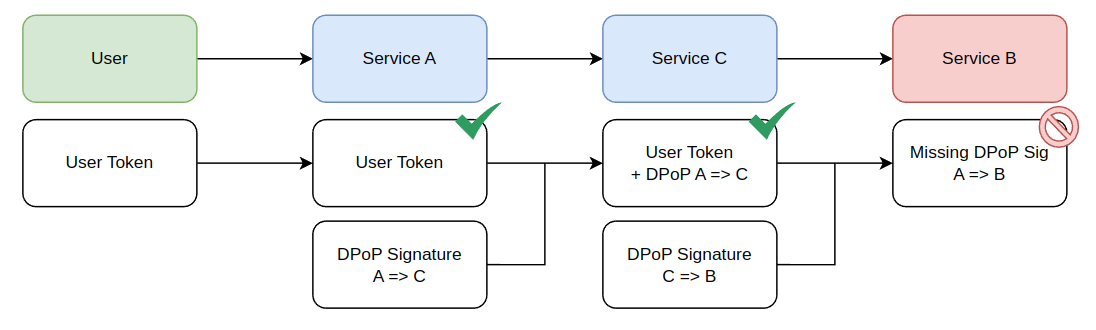

Since the request to Service B requires a valid DPoP signature and service C does not have one, it can't successfully call B.

DPoP edge cases

DPoP works great to solve the permissions problem. However, if the permissions are in the token, then it can't be used everywhere. The reason is that service C (the Untrusted Service) can create its own valid signatures and still call service B (the Trusted Service).

To prevent this, an external entity is necessary to link the user's access token to the signature itself. If only certain services are allowed to use that access token, then even though the untrusted service can generate a signature, that signature would not match the token.

Generating signatures

DPoP signatures can be generated with Authress service clients, using the implementations found in the Authress SDKs. This works because the Service Client Access Keys generated by Authress are private keys that support DPoP signature creation. The explicit steps are:

- Update your services to require DPoP signatures

- Generate a DPoP signature on every request using the Authress SDK and the service client access key

- Send both the user's access token and the DPoP signature with the request

- The receiving service should validate the signature and the user's access token

- Lastly, call Authress to authorize the actual request
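On the receiving side, the order of these checks can be sketched as follows. This is not the Authress SDK API: the three checks are passed in as plain callables so that the sketch stays independent of any library, and the stub implementations below perform no real cryptography or network calls.

```python
from typing import Callable, Optional


def handle_request(
    access_token: str,
    proof: str,
    verify_proof: Callable[[str, str], Optional[str]],
    verify_access_token: Callable[[str], Optional[str]],
    authorize: Callable[[str, str], bool],
) -> int:
    """Return the HTTP status code for an incoming request."""
    # Validate the DPoP signature; yields the calling service client ID.
    client_id = verify_proof(proof, access_token)
    if client_id is None:
        return 401
    # Validate the user's access token; yields the user ID.
    user_id = verify_access_token(access_token)
    if user_id is None:
        return 401
    # Ask the authorization service whether this client may act for this user.
    if not authorize(user_id, client_id):
        return 403
    return 200


# Stub checks for illustration only.
def fake_verify_proof(proof, access_token):
    signers = {"signed-by-service-a": "service-a", "signed-by-service-c": "service-c"}
    return signers.get(proof)


def fake_verify_access_token(access_token):
    return "user_1" if access_token == "valid-user-token" else None


def fake_authorize(user_id, client_id):
    # Hypothetical policy: only service-a may present user tokens here.
    return client_id == "service-a"


checks = (fake_verify_proof, fake_verify_access_token, fake_authorize)
print(handle_request("valid-user-token", "signed-by-service-a", *checks))  # 200
print(handle_request("valid-user-token", "signed-by-service-c", *checks))  # 403
print(handle_request("valid-user-token", "unsigned", *checks))  # 401
```

The second call shows the edge case described earlier: the untrusted service produces a signature that verifies, but the authorization step rejects it because that client is not allowed to use the user's token.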

Conclusion

Token attenuation is a security strategy that aims to reduce the attack surface related to sharing user access tokens with potentially untrusted entities or services. However, in practice, attenuation creates more problems than it solves, and preventing security regressions requires everyone involved to understand why it was implemented in the first place. Additionally, a token attenuation strategy might actually harm your product's reliability when implemented.

For help understanding this article or how you can implement a solution like this one in your services, feel free to reach out to the Authress development team or follow along in the Authress documentation.